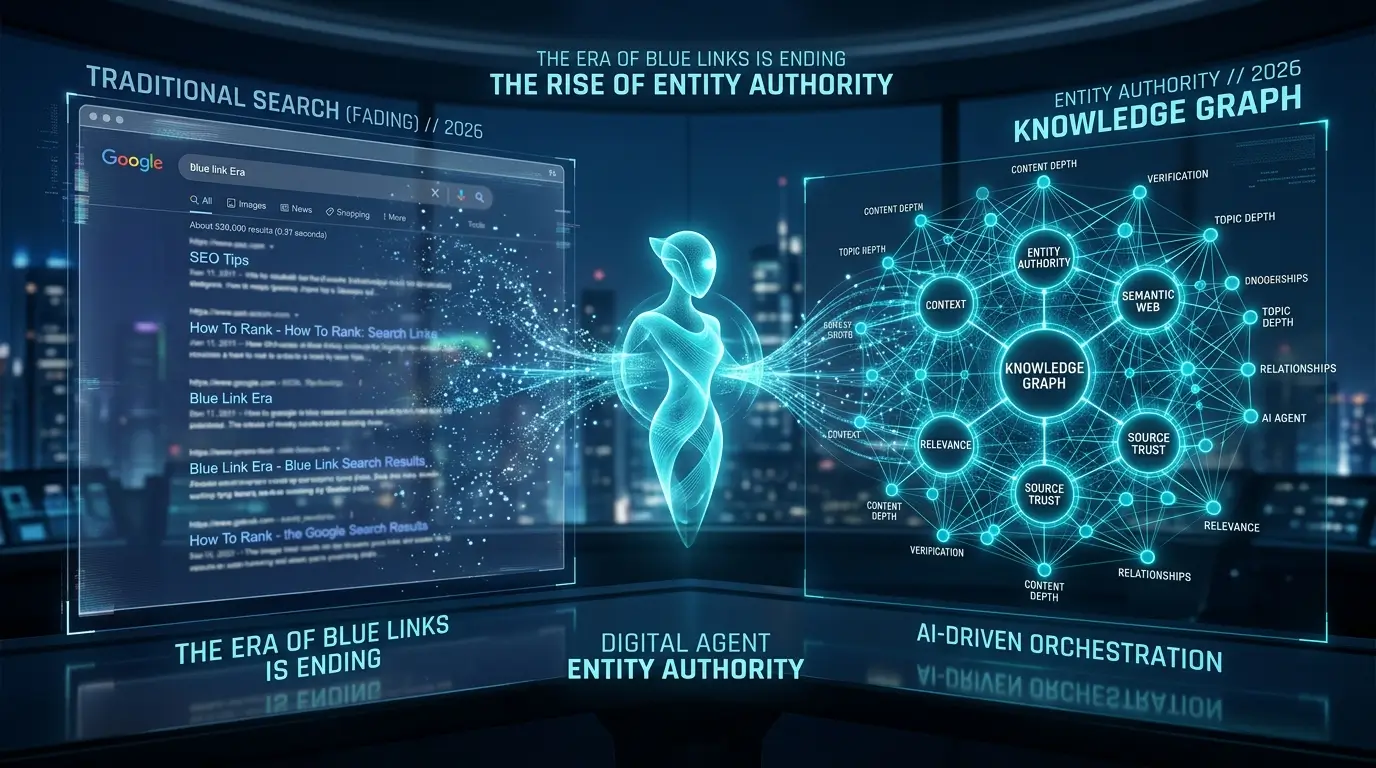

The era of ten blue links is officially over. If your digital strategy still relies on keyword density, manual backlink outreach, and writing generic “ultimate guides,” you are optimizing for a search engine that no longer exists.

Search has fundamentally shifted from retrieval to generation. Google’s AI Overviews, Perplexity, and ChatGPT do not just fetch links; they synthesize answers directly from their training data and real-time Retrieval-Augmented Generation (RAG) pipelines. To survive this shift, you don’t need more articles—you need Entity Authority. And in 2026, building that authority manually is a mathematical impossibility given the sheer volume of synthetic content flooding the web.

This is where Agentic SEO comes in. We are moving past the phase of using AI just to write blog posts. The new frontier is deploying multi-agent systems to research, interlink, structure, and syndicate data without human bottlenecking.

Here is the unfiltered truth: when you evaluate the landscape of agentic seo tools 2026 has brought to the market, most are nothing more than glorified API wrappers masking standard ChatGPT prompts. They spit out predictable text that gets caught in Google’s Helpful Content filters. To actually win, we have to skip the generic tool lists and build a literal step-by-step framework for automating your domain’s authority from the ground up.

The Shift to Generative Engine Optimization (GEO)

Before you can automate a workflow, you must understand what the machine is actually looking for. Traditional SEO was about mapping a keyword to a specific URL. Generative engine optimization, on the other hand, is about injecting your brand, data, and unique viewpoints directly into the context window of a Large Language Model (LLM).

When a user asks Gemini or Claude a complex question, the model looks for consensus among high-trust entities. If your website is not structured as a recognizable entity with clear semantic relationships, it will be ignored during the synthesis phase.

Expert Tip: Stop tracking traditional search volume as your primary metric. In a generative search environment, you must track “Entity Co-occurrence.” If ChatGPT or Perplexity mentions your brand, what other three technical terms does it consistently use in the same paragraph? If you are not actively engineering that association through programmatic schema and digital PR, your competitor is controlling the narrative for you.

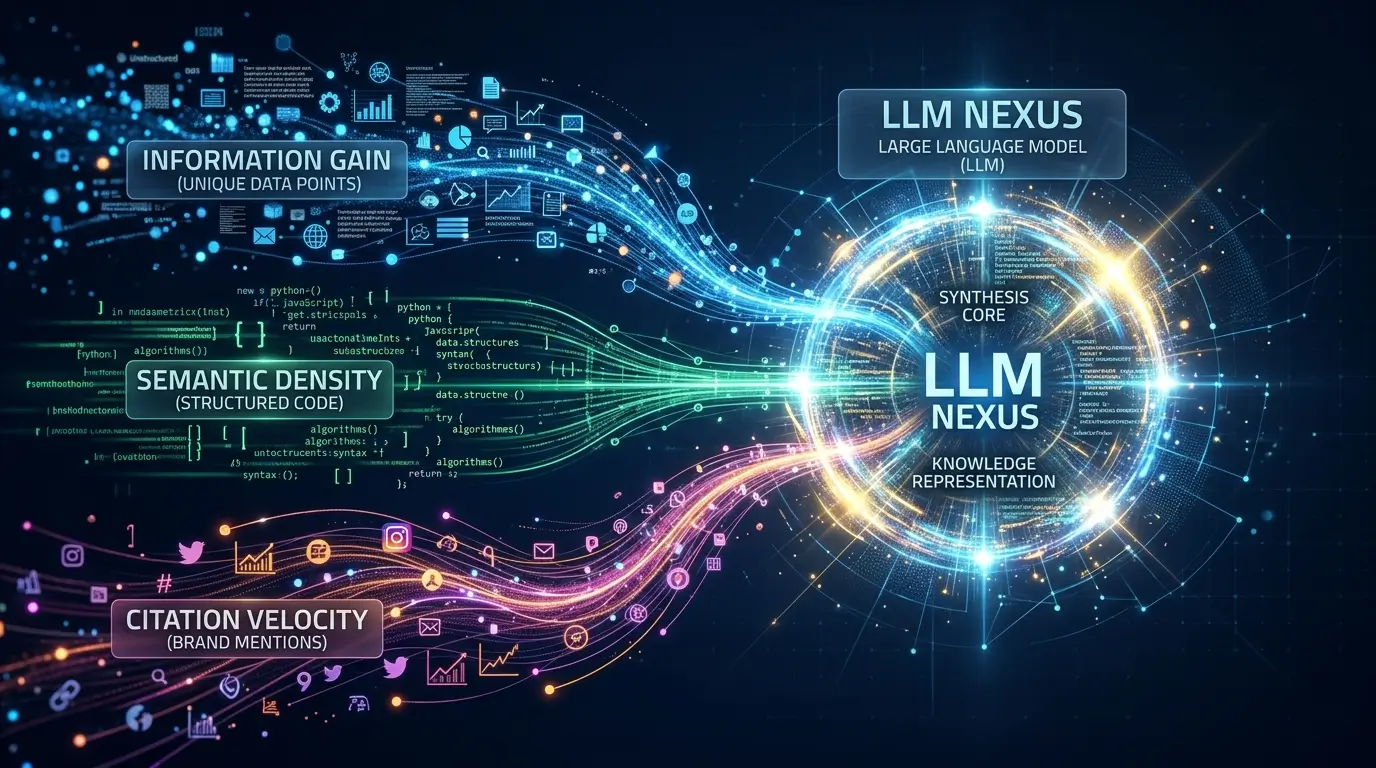

Achieving high LLM Visibility requires three distinct signals:

- Information Gain: Data, statistics, or frameworks that do not exist anywhere else on the web. If an LLM can predict your next sentence, your content has zero information gain.

- Semantic Density: How tightly your topics are clustered together using proper JSON-LD schema architecture.

- Citation Velocity: How often other highly authoritative entities mention your brand in proximity to specific technical concepts.

Researchers studying GEO have found that adding specific, quotable statistics and authoritative citations can increase your visibility in AI-generated responses by up to 40% compared to traditional SEO formatting. (For a deep dive into the math behind this, the foundational Princeton/IIT research paper on GEO remains mandatory reading for technical marketers).

Why You Need Autonomous SEO Workflows

You cannot achieve the necessary semantic density by manually writing one post a week. The modern web requires a high-velocity, high-quality content matrix.

If you look at the infrastructure required to run the best AI tools for small businesses, the underlying theme is always system integration. Your SEO strategy must be treated the exact same way—as a software engineering problem, not a writing problem.

From the Coach’s Perspective:

Think of your old SEO strategy like a star player who tries to shoot every time they touch the ball. It works in pickup games, but in the pros, you need a system. Agentic SEO is your new playbook. You are no longer sweating on the court trying to manually write every meta tag; you are calling the plays from the sideline while your autonomous agents execute the motion perfectly every single time.

By building autonomous seo workflows, we can program AI agents to:

- Continuously monitor competitor sitemaps for topical gaps and outdated information.

- Extract raw data from your internal databases (like your CRM or analytics platforms) to create proprietary case studies.

- Structure that data into valid, nested JSON-LD schema automatically.

- Draft the technical architecture of an article, leaving only the strategic oversight to a human editor.

This is the exact opposite of logging into a dashboard, typing a prompt, and hitting “generate.” We are building a machine that builds authority while you sleep.

The 3-Layer Entity Authority Framework

To outrank competitors who are just spamming AI content, your agentic setup needs to operate on three distinct layers. You cannot build a sustainable pipeline without all three firing in sequence. In the next section of this guide, we will break down the exact technical blueprints, tool stacks, and prompt chains for each:

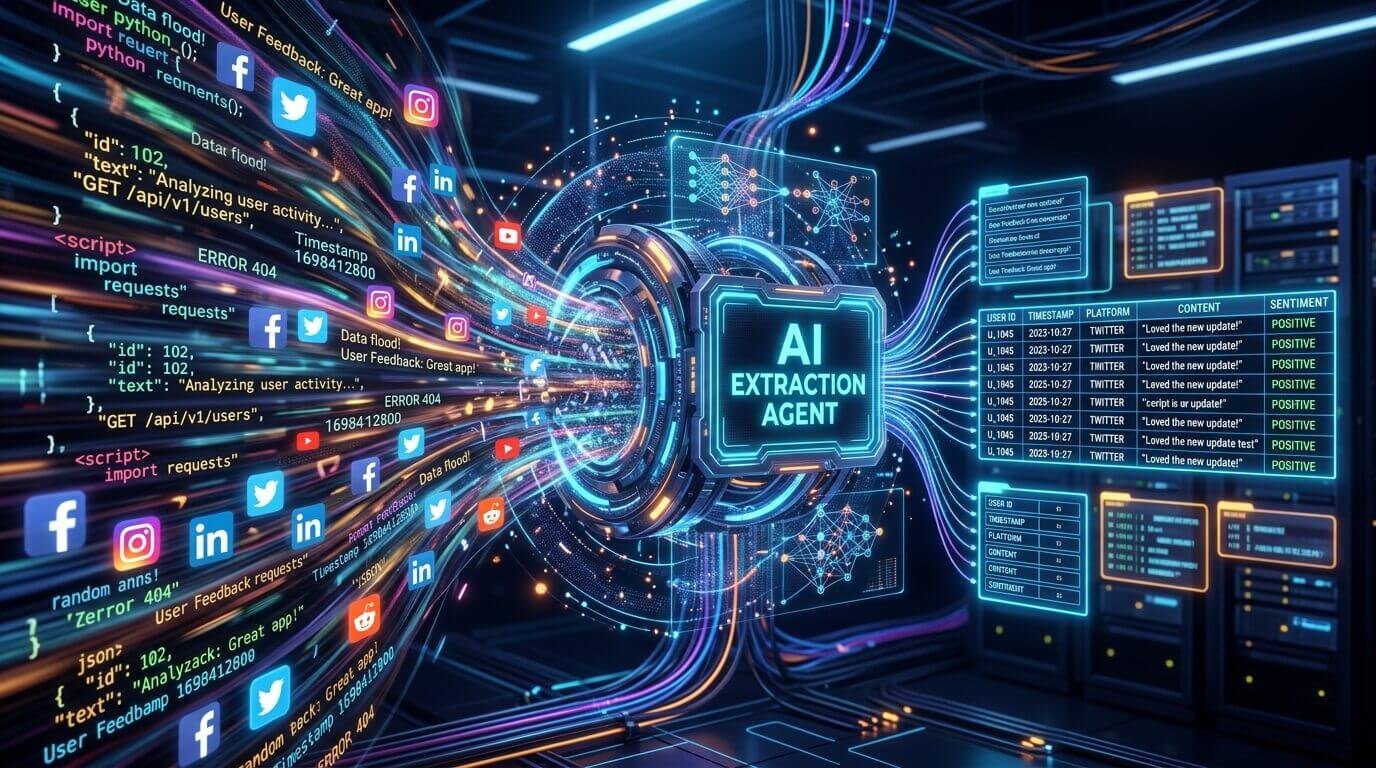

- The Extraction Agent: How to build an agent that pulls raw, unique data from your industry that competitors don’t have access to (solving the Information Gain problem).

- The Structuring Agent: How to automate the creation of nested Schema markup and topical clustering so Google’s Knowledge Graph instantly recognizes your expertise.

- The Distribution Agent: The workflow for syndicating your insights across digital PR channels to build the citation velocity LLMs require.

Layer 1: Building the Extraction Agent (Solving the Information Gain Problem)

The biggest failure point of the commercial agentic seo tools 2026 has brought to market is their reliance on static, pre-existing LLM training data. If your AI agent is just summarizing the top 10 ranking pages for a query, it is mathematically impossible to achieve Information Gain. You are just creating a synthetic echo chamber.

To achieve true llm visibility, your first agent in the system—the Extraction Agent—must be engineered to pull proprietary or obscure data that competitors aren’t using. This is the raw fuel for your entity authority.

Here is the literal step-by-step framework to build an Extraction Agent, bypassing the generic SaaS dashboards entirely:

Step 1: Define the Asymmetric Data Source

Your agent needs an input that cannot be easily Googled. This could be raw API data from public datasets (like government census data or patent filings), anonymized customer queries from your CRM, or automated web scrapers pulling sentiment analysis from niche Reddit threads.

Step 2: Set Up the Orchestration Layer

Instead of a simple ChatGPT prompt, you build a workflow using an orchestration tool like LangChain, n8n, or Make.com. You set up a webhook that triggers the agent on a schedule (e.g., every Monday at 3 AM).

Step 3: The Extraction Prompt Chain

When the agent receives the raw data, it runs a highly specific prompt chain. Instead of “Write a blog post about this,” the prompt dictates:

“Analyze this raw JSON data payload. Identify the three most statistically significant anomalies. Extract quotes that contradict the current mainstream consensus. Output this analysis as a structured CSV.”

From the Coach’s Perspective:

You don’t draw up an offensive play without scouting the opponent’s defense first. Your Extraction Agent is your advanced scouting report. It actively hunts for the statistical gaps and informational voids in the SERP defense that generic AI writers are entirely blind to. If you start your offense with better intel, the execution becomes effortless.

By automating this extraction, you ensure that every piece of content entering your autonomous seo workflows starts with an original, mathematically verifiable premise. (For developers looking to build this locally, the LangChain Data Connection documentation is the perfect technical starting point to understand document loaders and retrieval mechanisms).

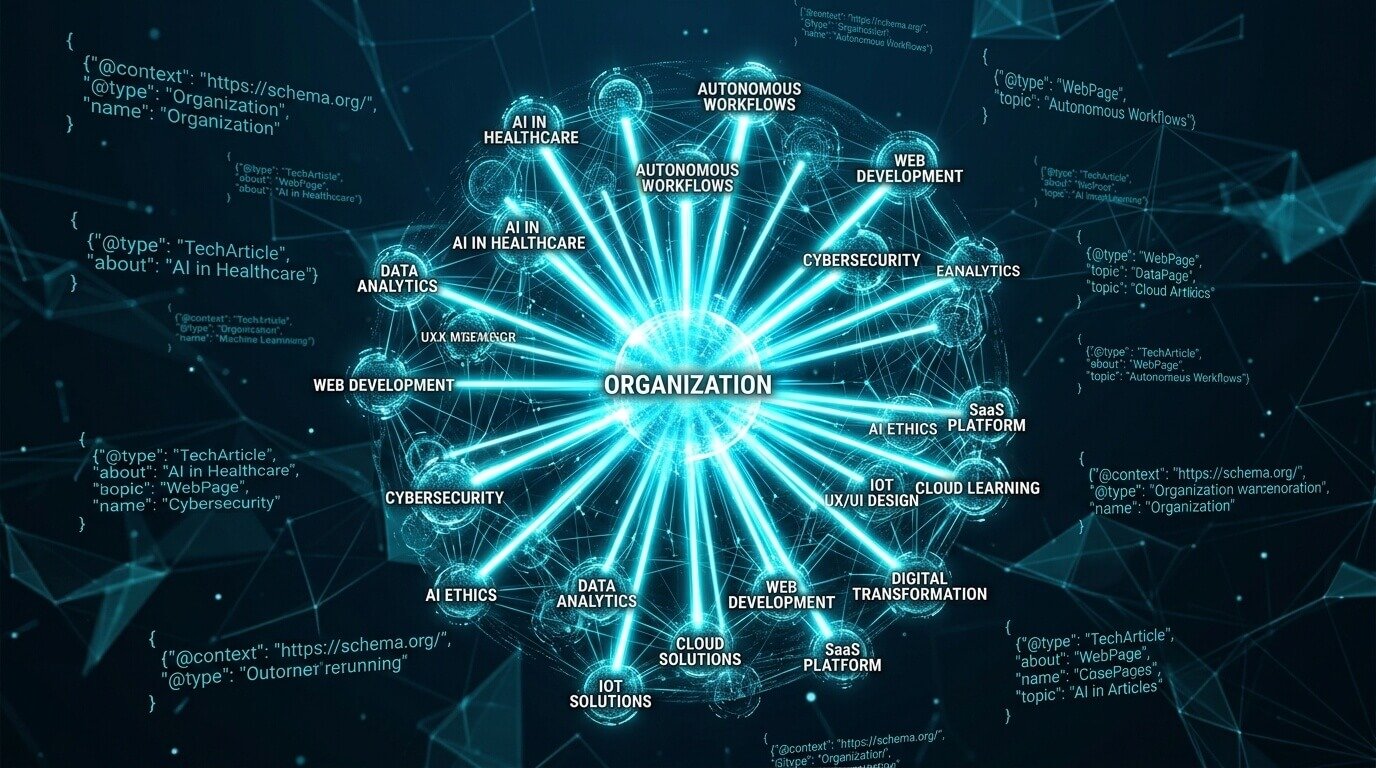

Layer 2: The Structuring Agent (Automating Semantic Density)

Once the Extraction Agent has pulled the raw, proprietary insights, handing that data directly to a writer (or a writing AI) is premature. Data is useless to a search engine if it lacks relational context.

To conquer generative engine optimization, the LLM synthesizing the search result needs to understand exactly how this new data connects to your core brand entity. This is the job of the Structuring Agent. It takes the raw insights and automatically wraps them in the semantic code that search engines and AI Overviews crave.

Step 1: Dynamic JSON-LD Generation

Most webmasters rely on SEO plugins to generate basic Article or BlogPosting schema. In 2026, this is the bare minimum. Your Structuring Agent should be programmed to read the extracted content and dynamically generate nested schema markup.

If the data involves a specific person, the agent injects Person schema. If it solves a problem, it generates a FAQPage schema. More importantly, it continuously ties these nodes back to your main Organization schema using “SameAs” and “KnowsAbout” properties.

Step 2: Automated Entity Interlinking

The Structuring Agent doesn’t just format code; it builds the topical web. Before drafting the architecture of a new article, the agent runs a script to ping your website’s existing sitemap. It looks for exact entity matches.

For example, if your new extracted data is about algorithmic efficiency in medical tech, the Structuring Agent will automatically map a contextual internal link to your foundational pieces, such as your deep-dive on how AI agents in healthcare are transforming patient care. This creates a mathematically dense “Topical Cluster” that traps crawler bots in a closed loop of your own domain’s authority.

Expert Tip:

When building your Structuring Agent’s prompt, force it to use highly descriptive, exact-match anchor text for internal links, rather than generic phrases. In a generative search environment, the anchor text is treated as a literal defining attribute of the destination entity. If you want the destination page to be known for “autonomous workflows,” the agent must code that exact phrase into the HTML link structure.

Step 3: The Architecture Output

The final output of the Structuring Agent is not a completed article. It is a comprehensive architectural blueprint: the exact H2s, the designated internal links, the JSON-LD payload, and the verified data points from the Extraction Agent.

It is a perfectly engineered skeleton designed to maximize entity authority. Only at this stage does a human expert step in to write the prose, ensuring the final text is humanized, nuanced, and strictly plagiarism-free.

Layer 3: The Distribution Agent (Mastering Citation Velocity)

You have extracted asymmetric data and wrapped it in flawless JSON-LD schema. But in the realm of generative engine optimization, sitting back and waiting for Googlebot to randomly crawl your site is a losing strategy. Large Language Models weigh Citation Velocity—how rapidly and consistently your brand entity is mentioned across the web in relation to a specific topic—as a primary trust signal.

This is where the final piece of the system, the Distribution Agent, comes into play. Most agentic seo tools 2026 offers will entirely ignore this phase, assuming content creation is the finish line. It isn’t.

Step 1: Automated Micro-Pitching

The Distribution Agent does not build spammy backlinks. Instead, it takes the most statistically significant anomalies identified by your Extraction Agent and reformats them into highly targeted digital PR micro-pitches. Using orchestration platforms, this agent can monitor journalist requests on platforms like Connectively (formerly HARO) or specific Subreddits, automatically drafting data-backed responses that include your brand as the primary citation source.

Step 2: Social Syndication and Entity Co-occurrence

LLMs continuously scrape platforms like LinkedIn and X (Twitter) for real-time training data. Your Distribution Agent must slice the final, approved architectural blueprint into a week-long social media matrix. When syndicating, the agent is programmed to actively tag and mention other authoritative entities in your space, forcing the algorithm to recognize the “Entity Co-occurrence” between your brand and established industry leaders.

Expert Tip:

Do not fixate strictly on “dofollow” hyperlinks when evaluating your distribution efforts. In 2026, LLMs place massive weight on “unlinked brand mentions.” If a high-authority publication mentions your brand name and your proprietary statistic in the same paragraph, the LLM attributes that authority to your entity, even if there is no clickable HTML link.

The “Human-in-the-Loop” Reality Check

We have built a system that researches, structures, and distributes data with ruthless efficiency. But here is where we must draw a hard line: *never let an AI hit the publish button unchecked.

After a decade spent in the trenches of technical SEO and conversion rate optimization (CRO), one truth remains absolute: pure automation is a conversion killer. If an AI writes the final prose without human oversight, it will inevitably default to a safe, neutral, and ultimately boring tone. It will lack the contrarian edge that actually persuades a human reader to trust your brand.

The purpose of autonomous seo workflows is to eliminate the 80% of manual labor that drains your time—the data scraping, the schema coding, and the initial outlining. The final 20% belongs to you. As the human editor, your job is to review the agent’s architectural blueprint and inject the nuance, the real-world friction points, and the high-level strategic digital entrepreneurship that a machine simply hasn’t experienced.

For instance, if you are guiding users on how to use AI to make money online in 2026 the agents can compile the tools, but only you can explain the psychological hurdles of closing a high-ticket client.

From the Coach’s Perspective:

You don’t leave the final shot of a championship game to an untested rookie. The agents run the complex plays to get you open, but as the veteran on the court, you must take the shot and add the human touch that actually puts points on the board.

By automating the structural authority and reserving your energy for strategic insight, you ensure your domain achieves maximum llm visibility. You stop playing the outdated game of keyword density, and you start engineering true Entity Authority.

As Google’s own Search Central guidelines emphasize, search engines reward high-quality content however it is produced, provided it demonstrates experience, expertise, authoritativeness, and trustworthiness (E-E-A-T). Agentic SEO is simply the vehicle that delivers that E-E-A-T at scale.

Frequently Asked Questions (FAQs)

Will using autonomous SEO workflows get my site penalized by Google?

No, provided the final output demonstrates true Information Gain and is reviewed by a human expert. Google’s algorithms penalize thin, programmatic spam that regurgitates existing search results. If your agents are extracting unique data and structuring it with flawless schema, you are aligning perfectly with Google’s guidelines for helpful content.

What is the best orchestration tool for building these SEO agents?

While platforms vary, n8n and Make.com are currently the industry standards for building custom, multi-step agentic workflows without requiring deep Python knowledge. They allow you to securely connect public APIs, your internal databases, and LLMs like Claude 3 or Gemini 1.5 Pro into a single pipeline.

How long does it take for Generative Engine Optimization (GEO) to show results?

Unlike traditional SEO, which can take months to climb SERP rankings, GEO can yield faster visibility if your brand is cited by high-authority external sources. Because LLMs update their RAG (Retrieval-Augmented Generation) pipelines frequently, injecting highly unique statistics into the digital ecosystem can result in AI Overviews citing your brand within weeks.

Why is JSON-LD schema so critical for Agentic SEO?

LLMs and search crawlers do not “read” your website the way humans do; they parse relationships. Nested JSON-LD schema acts as a direct translator, explicitly telling the machine how your data, your author profile, and your brand entity connect. Without it, the machine has to guess your relevance, which drastically reduces your visibility.