Introduction

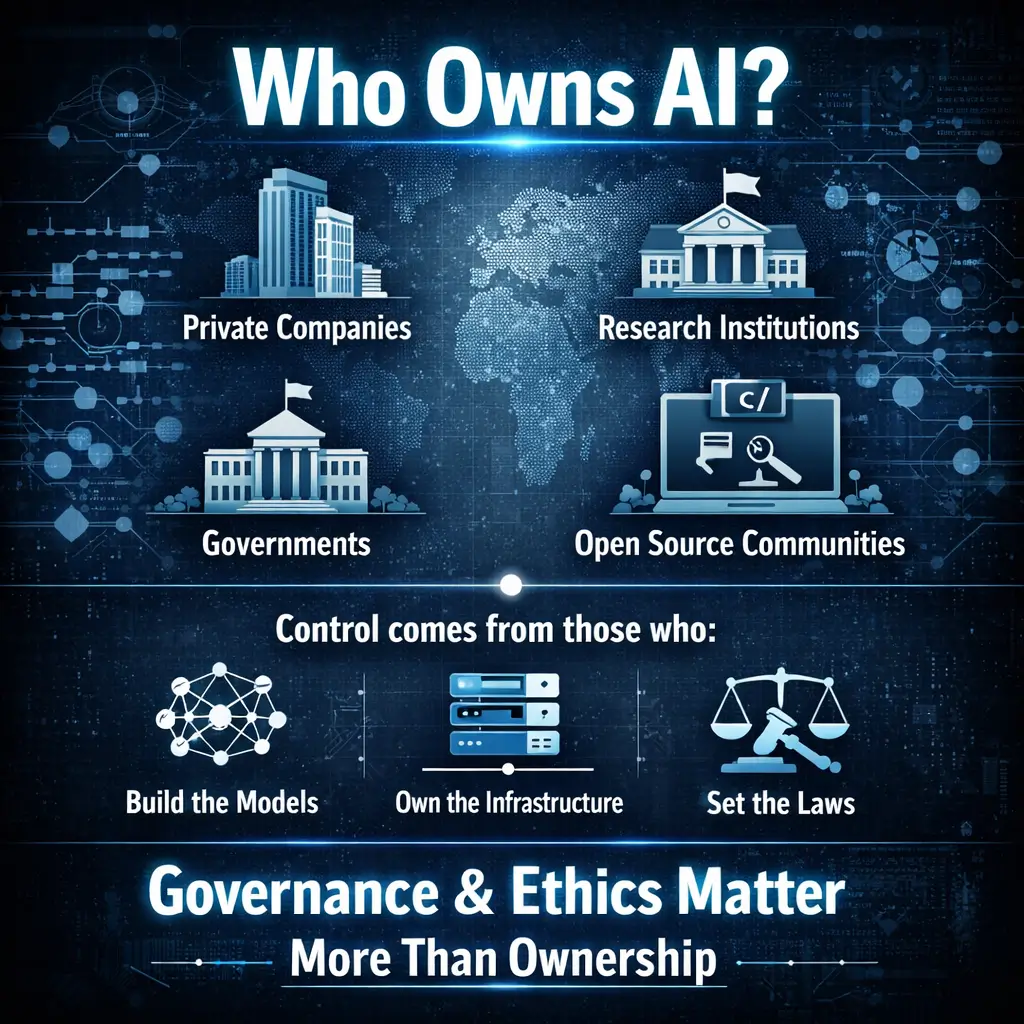

Artificial intelligence has moved from research labs into everyday life. It powers search engines, recommendation systems, medical tools, and business software. As AI becomes more influential, a common question arises: who owns AI?

This question is not only about companies and profits but also about control, accountability, and the future of technology. Understanding ownership in AI requires looking beyond simple legal definitions and examining how AI systems are created, governed, and deployed.

What Does Ownership Mean in Artificial Intelligence?

Before answering who owns AI, it is important to clarify what ownership actually means in this context. AI is not a single object or invention. It is a combination of algorithms, data, infrastructure, and human expertise. Ownership can apply to different layers of this system.

At one level, companies may own the code and models they develop. At another level, the data may belong to users, organizations, or governments. Infrastructure such as cloud servers is often owned by separate providers. This layered structure makes AI ownership complex and often misunderstood.

Who Owns AI at the Company Level

Who owns AI models developed by private companies

Most advanced AI systems today are developed by private technology companies such as OpenAI, Google, Microsoft, Meta, and Amazon. These companies legally own the AI models they build, including the underlying architecture and trained parameters.

These organizations invest heavily in research, talent, and computing infrastructure. As a result, they retain ownership of their AI systems and control how their models are licensed and used, including decisions about access, deployment, and restrictions.

Who owns AI when it is offered as a service

In many cases, AI is not sold as a product but provided as a service. Users interact with AI tools through applications or APIs. Even though individuals and businesses rely on these tools, ownership remains with the company that built and maintains the AI system.

This means users do not own the AI itself. They only have permission to use it under specific terms. This distinction is important when discussing control and responsibility.

Who Owns AI Data and Why It Matters

Who owns AI training data

Data is the foundation of modern AI. Training data may come from public sources, licensed datasets, user interactions, or proprietary collections. Ownership of this data varies depending on its source.

Public data may not be owned by a single entity, but its use can still be regulated by law. Licensed data remains the property of the original owner. User-generated data may belong to the individuals who created it, although platforms often obtain usage rights through their terms of service.

This creates ongoing debates about consent, privacy, and fairness. When asking who owns AI, understanding who owns AI training data is just as important as model ownership.

Who owns AI outputs

Another common question is who owns the content generated by AI. In many jurisdictions, AI-generated output does not receive the same legal protection as human-created work. In the United States, for example, copyright protection requires human authorship. In practice, ownership often depends on platform terms and local laws.

This uncertainty highlights the evolving nature of AI governance and intellectual property.

Who Owns AI in OpenAI’s Case

Who owns AI at OpenAI specifically

OpenAI is often discussed when asking who owns AI because of its unusual structure. It is governed by a nonprofit organization that controls a for-profit entity. This structure was designed to balance commercial sustainability with long-term public benefit.

The nonprofit board holds final authority, meaning no single investor or individual owns OpenAI outright. While major partners have influence, control remains centralized within the nonprofit governance model.

This approach is different from traditional tech companies and reflects an attempt to manage AI responsibly at scale.

Who Owns AI at the Government and National Level

Who owns AI developed by governments

Governments around the world develop and use AI for defense, healthcare, transportation, and public services. AI systems created by government agencies are typically owned by the state.

However, governments often rely on private contractors to build these systems through public–private AI partnerships. In such cases, ownership may be shared or defined through contracts that govern AI intellectual property rights and data usage. This raises questions about algorithmic transparency, accountability, and public oversight of government AI systems.

Who owns AI in national strategies

Some countries treat AI as a strategic national asset. They invest in research, regulate usage, and influence development through policy. While governments may not own all AI systems directly, they play a significant role in shaping who controls them.

Who Owns AI in Open Source Projects

Who owns AI when it is open source

Not all AI is owned by corporations. Open source AI models are released publicly, allowing anyone to study, modify, and use them. In these cases, ownership is effectively shared: the original authors usually retain copyright but grant broad rights to everyone under an open license.

While no single entity controls the model, governance often remains with a foundation or core group of developers. Open source AI promotes transparency and collaboration, but it also introduces challenges related to misuse and accountability.

Who owns AI responsibility in open source systems

Even without traditional ownership, responsibility still exists. Developers, deployers, and users all share ethical and legal obligations. This distributed model contrasts sharply with corporate-owned AI systems.

Who Owns AI Power and Decision Making

Who owns AI control in practice

Legal ownership does not always equal practical control. Control over AI comes from access to computing power, data, and deployment channels. Large technology companies dominate these resources, giving them significant influence over how AI is used globally.

This concentration of power raises concerns about fairness, competition, and societal impact.

Who owns AI accountability

When AI systems cause harm or make errors, accountability becomes critical. Ownership plays a key role in determining who is responsible. In most cases, responsibility falls on the organization that owns and deploys the AI, not the AI itself.

Clear accountability frameworks are still developing, especially as AI systems become more autonomous.

The Ethical Question Behind Who Owns AI

Why who owns AI matters ethically

The question of who owns AI is not just legal or technical. It is deeply ethical. Ownership determines who sets priorities, who benefits financially, and who bears responsibility for risks.

If AI is controlled by a small number of powerful entities, societal outcomes may reflect narrow interests. Broader participation and oversight can help align AI development with public values.

Can AI ever own itself

Some speculate about AI owning itself or acting independently. Under current legal systems, AI cannot own property or hold rights. AI remains a tool created and controlled by humans and organizations.

This reality reinforces the importance of human responsibility in AI governance.

Conclusion: Who Owns AI and What Comes Next

So, who owns AI? The answer depends on perspective. Companies own most commercial AI models. Governments typically own the AI their agencies develop, sometimes sharing rights with private contractors. Open-source communities share ownership through open licenses, allowing broader access and collaboration. Meanwhile, ownership of data varies by source, and real control often lies with those who manage AI infrastructure and deployment.

Artificial intelligence is not owned by any single entity. Its development and influence are shared among innovators, organizations, governments, and the global community that shapes its direction and use.

Ultimately, ownership of AI is defined by accountability. The decisions made today around governance, ethics, and application will determine how responsibly and effectively it serves society in the future.